Why CaraOne’s results are so much better than other products’ when you scale big

Semantic search revolutionized the way we find assets. Be it texts, soundbites, or video clips, the ability to search by meaning fundamentally changed workflows in content retrieval and generation. Semantic search feels like magic. You look for a vague concept, and the AI returns exactly what you meant.

But hidden in every semantic search and RAG system is a looming disaster: these systems don’t scale. Not in terms of database performance, but in terms of result quality. Paradoxically, the more assets you add to your vector database, the worse retrieval becomes. In demos and PoCs with a few thousand items, the answers look incredible. But in production, when you index millions, you discover the problem: the system serves up the same mediocre results again and again, while the most relevant knowledge remains hidden. RAG systems degrade as they scale.

At scale, semantic search engines spam you with the same results over and over, while the assets you actually need get buried under piles of useless noise. It is as if certain assets keep pushing themselves to the front, like participants in a discussion with inflated egos who talk loudly about every topic that comes up without contributing anything useful. And the more assets you add, the higher the chance that you inadvertently invite one of these “annoying participants,” who will then keep showing up in the results again and again.

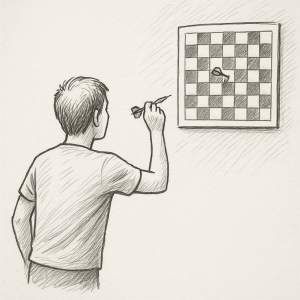

Why does this happen? The issue is deeply mathematical. It comes down to the shape of the vector space that semantic databases and RAG systems store their assets in. Imagine playing darts, but instead of a dartboard, you hang a chessboard on the wall and throw your darts at it. You aim for E3, then F6, then D7. Every square is the same size and equally reachable. That’s how an ideal retrieval system should work: every asset has the same chance of being found.

But real vector spaces aren’t chessboards. They look more like actual dartboards. Some targets, like the bullseye, are so tiny they’re almost impossible to hit. Others, especially the big wedges on the outer rim, are enormous and catch dart after dart, even the ones not aimed at them. No matter how carefully you aim, you keep hitting the same few oversized areas, just as semantic search keeps surfacing the same mediocre assets. The bullseye, the precise knowledge you’re actually looking for, is practically unreachable. At ObviousFuture, we solved this problem through extensive mathematical research.
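The uneven dartboard closely matches a well-documented effect in high-dimensional nearest-neighbor search known as hubness: a few points appear in the top-k results of disproportionately many queries, while many others are almost never returned. The toy sketch below illustrates that effect on random Gaussian data. It is not CaraOne’s analysis or method. For each point it counts how often that point appears among the k nearest neighbors of all other points (its "k-occurrence"). On a perfect "chessboard," every point would appear about k times. The helper names `k_occurrence` and `skewness`, the dataset sizes, and the dimensions are illustrative assumptions.

```python
import numpy as np

def k_occurrence(X: np.ndarray, k: int) -> np.ndarray:
    """Count how often each row of X appears among the k nearest neighbours
    (Euclidean distance) of all other rows. A point is never its own neighbour."""
    # Pairwise squared Euclidean distances via ||a||^2 + ||b||^2 - 2ab
    sq = np.sum(X * X, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    np.fill_diagonal(d2, np.inf)  # exclude self-matches
    # Indices of the k nearest neighbours of every point (unordered within the k)
    knn = np.argpartition(d2, k, axis=1)[:, :k]
    return np.bincount(knn.ravel(), minlength=X.shape[0])

def skewness(x: np.ndarray) -> float:
    """Sample skewness of the k-occurrence counts; large values mean a few 'hub' points."""
    x = x.astype(float)
    return float(np.mean((x - x.mean()) ** 3) / x.std() ** 3)

rng = np.random.default_rng(0)
n, k = 2000, 10
for dim in (3, 100):
    X = rng.standard_normal((n, dim))  # toy stand-in for embeddings (assumption)
    counts = k_occurrence(X, k)
    print(
        f"dim={dim:>3}  max={counts.max():>4}  "
        f"never retrieved={np.sum(counts == 0):>4}  skew={skewness(counts):.2f}"
    )
```

In runs like this, the 100-dimensional case typically shows a much larger maximum count, more points that never show up as anyone’s neighbor, and a clearly higher skew than the 3-dimensional case. These are the oversized wedges and the unreachable bullseye from the analogy.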

With CaraOne, we give the models glasses. Special mathematical lenses that correct the distortion in the vector space. With these glasses on, the warped dartboard is transformed back into a clean chessboard. Every asset regains its rightful square, every result gets an equal chance to show up, and retrieval stays fair whether you’re working with five thousand assets or fifty million. CaraOne keeps retrieval balanced, stable, and linear at any scale.

Too many customers relying on semantic search have already been misled and set up for disappointment. They saw the perfect demo, they ran an amazing PoC on a small set of assets, and they invested, only to discover months later, when they indexed their full archive, that results degraded, repetition set in, and retrieval quality collapsed. Users are frustrated, seeing the same assets resurface again and again, while the real treasures in their storage stay hidden.

With CaraOne, that story ends. Semantic search finally works in the real world, across massive archives. We fix the broken promises of semantic search and RAG applications. And we invite you to test it. Run a large-scale PoC, side by side: compare CaraOne with any other product. You’ll see the difference in retrieval quality yourself, thanks to our custom models that correct distorted vector spaces and turn them into a fair playing field. Finally, you’ll get the results others only promised you: the ones you are actually looking for.
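For a side-by-side PoC, one simple way to put a number on the repetition described above is to log each system’s top-k results for the same set of queries and measure how concentrated they are. Relevance judgments are still needed alongside this. The sketch below assumes such logs exist as one list of asset IDs per query. The function name `repetition_report`, the log format, and the chosen metrics (distinct-asset ratio, the share of result slots taken by the most frequent assets, and the maximum repeat count) are illustrative assumptions, not part of CaraOne or any other product’s API.

```python
from collections import Counter
from typing import Dict, List, Sequence

def repetition_report(results: Sequence[Sequence[str]], top_n: int = 10) -> Dict[str, float]:
    """Summarise how repetitive a system's top-k result lists are.

    results: one list of returned asset IDs per query (hypothetical log format).
    """
    counts = Counter(asset for hits in results for asset in hits)
    total_slots = sum(counts.values())
    if total_slots == 0:
        raise ValueError("no results logged")
    top_share = sum(c for _, c in counts.most_common(top_n)) / total_slots
    return {
        "distinct_ratio": len(counts) / total_slots,  # 1.0 = no asset ever repeated
        "top_n_share": top_share,  # share of all slots filled by the top_n most frequent assets
        "max_repeats": float(max(counts.values())),  # how often the loudest asset appeared
    }

# Toy logs for three queries with k = 3 (made-up IDs, for illustration only)
logs: Dict[str, List[List[str]]] = {
    "system_a": [["a1", "a2", "a3"], ["a1", "a2", "a4"], ["a1", "a2", "a5"]],
    "system_b": [["b1", "b2", "b3"], ["b4", "b5", "b6"], ["b7", "b8", "b1"]],
}
for name, hits in logs.items():
    print(name, repetition_report(hits, top_n=2))
```

A lower `distinct_ratio` and a higher `top_n_share` indicate that the same few assets keep resurfacing across unrelated queries. These metrics capture repetition only, so they complement rather than replace judging whether the returned assets are relevant.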